In August 2025, the research paper titled “OMR-Net+: a frequency-aware feature refinement and entropy modeling method for efficient screen content image compression”, co-authored by the project participants – Prof. Hui Yuan, Dr Xin Lu, and others from Shandong University, in collaboration with Prof. Junyan Huo from Xidian University, China, was accepted for publication in IEEE Signal Processing Letters.

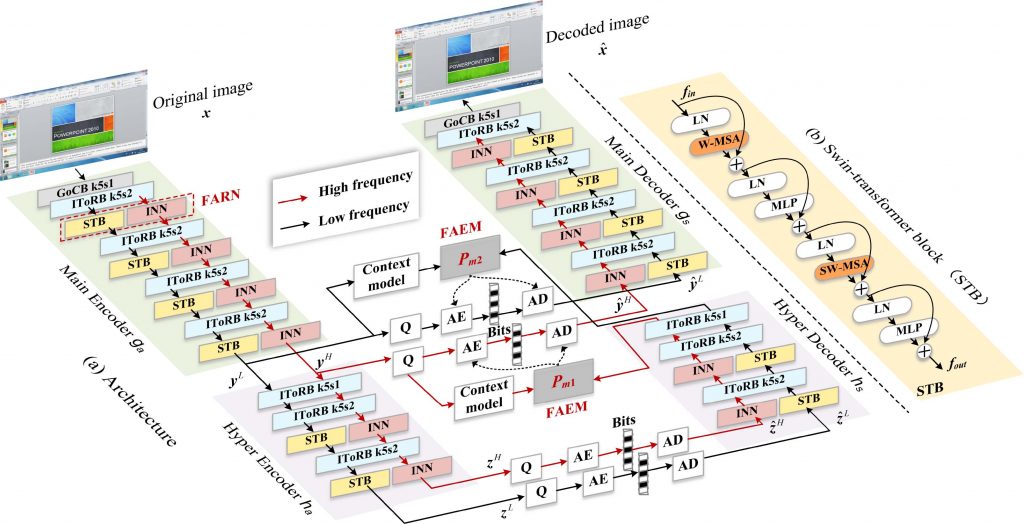

This paper focuses on screen content image (SCI) compression, addressing two key issues facing existing learned image compression methods: 1) insufficient frequency-aware processing, and 2) suboptimal entropy modelling for mixed-frequency components. We propose OMR-Net+ (Figure 1), a novel SCI compression method built on two frequency-aware components: a frequency-aware refinement network (FARN) and a frequency-aware entropy model (FAEM). FARN uses an invertible neural network to preserve critical high-frequency details and a transformer-based model to reduce redundancy in low-frequency features. FAEM uses a parallel context model to provide conditional probability estimation tailored separately to high- and low-frequency features, improving both coding performance and computational efficiency.
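To make the high/low-frequency distinction concrete, here is a minimal sketch of a frequency split on one row of pixels. It is an illustration only and is not the FARN architecture: the function name `split_frequencies`, the moving-average filter, and the `radius` parameter are all assumptions chosen for simplicity. The low-frequency part is a local average, and the high-frequency part is the residual, which is where sharp text and graphic edges end up.

```python
def split_frequencies(row, radius=1):
    """Split a 1-D row of pixel values into low- and high-frequency parts.

    Hypothetical helper for illustration; not taken from the paper.
    """
    n = len(row)
    low = []
    for i in range(n):
        # Moving average over a window clipped at the borders (a crude low-pass filter).
        window = row[max(0, i - radius):min(n, i + radius + 1)]
        low.append(sum(window) / len(window))
    # The residual keeps the sharp transitions, such as text edges.
    high = [x - l for x, l in zip(row, low)]
    return low, high


# A row crossing a sharp, text-like edge: flat background, then a bright stroke.
row = [0, 0, 0, 255, 255, 255]
low, high = split_frequencies(row)

# The two parts add back up to the original row.
reconstructed = [l + h for l, h in zip(low, high)]
```

In this toy row the high-frequency residual is zero in the flat regions and non-zero only around the edge, which is why treating the two components differently can pay off for screen content.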

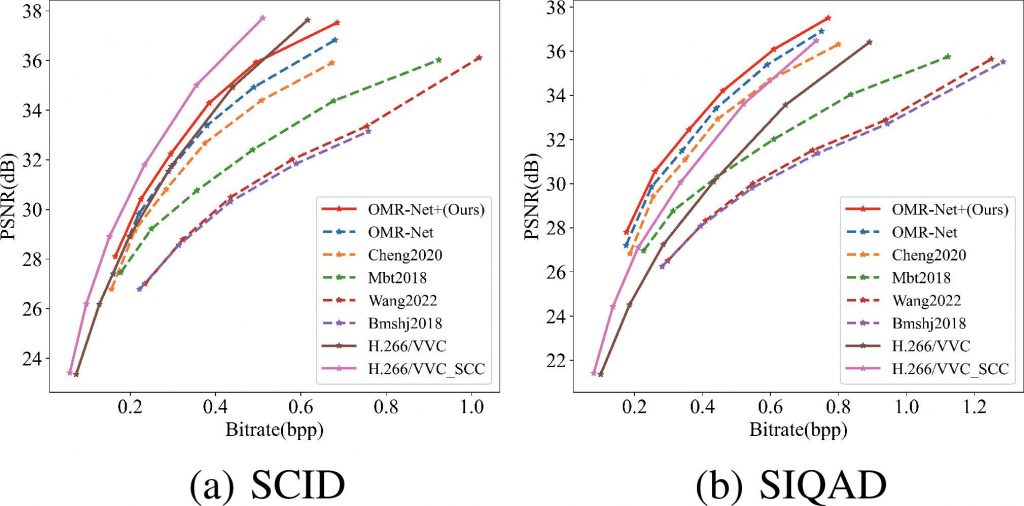

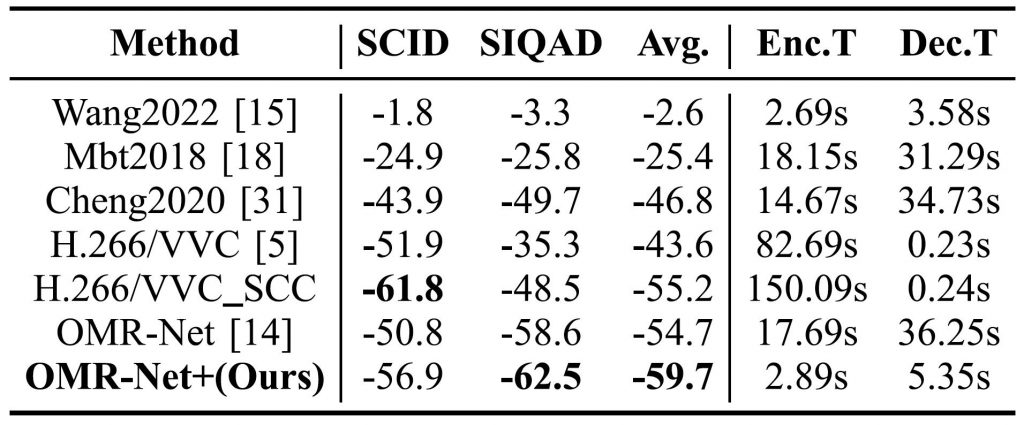

Experimental results show that OMR-Net+ significantly outperforms state-of-the-art learned image compression (LIC) methods in rate-distortion performance, demonstrating its potential for efficient SCI compression. Specifically, we compared the proposed OMR-Net+ with H.266/VVC (VTM19.0), H.266/VVC-SCC [1], and state-of-the-art LIC methods implemented with CompressAI [2], including OMR-Net [3], Cheng et al. [4], Mbt2018 [5], Bmshj2018 [6], and Wang et al. [7]. As shown in Figure 2 and Figure 3, OMR-Net+ outperforms the other LIC methods. Compared to H.266/VVC, OMR-Net+ achieves superior performance on SIQAD but underperforms on SCID, indicating that OMR-Net+ is particularly effective for SCIs rich in text and graphical elements, such as those in SIQAD.
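For readers unfamiliar with rate-distortion comparisons, the following minimal sketch computes the two quantities usually plotted against each other: bitrate in bits per pixel and reconstruction quality. It assumes PSNR as the distortion metric, which this post does not state, and the helper names `psnr` and `bits_per_pixel` are hypothetical rather than part of CompressAI or the paper's code.

```python
import math


def psnr(original, decoded, max_value=255):
    """Peak signal-to-noise ratio in dB between two equal-length pixel lists.

    Assumed metric for illustration; the post does not name the one used.
    """
    mse = sum((a - b) ** 2 for a, b in zip(original, decoded)) / len(original)
    if mse == 0:
        return math.inf  # identical images: no distortion
    return 10 * math.log10(max_value ** 2 / mse)


def bits_per_pixel(num_bytes, width, height):
    """Bitrate of a compressed image in bits per pixel."""
    return num_bytes * 8 / (width * height)


# Example: a 100x80 image compressed to 1000 bytes.
rate = bits_per_pixel(1000, 100, 80)
quality = psnr([10, 20, 30, 40], [11, 19, 30, 42])
```

A codec has better rate-distortion performance when it reaches higher quality at the same bits per pixel, or the same quality at fewer bits per pixel.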

References:

[1] B. Bross et al., “Overview of the versatile video coding (VVC) standard and its applications,” IEEE Trans. Circuits Syst. Video Technol., vol. 31, no. 10, pp. 3736–3764, Oct. 2021.

[2] J. Bégaint, F. Racapé, S. Feltman, and A. Pushparaja, “CompressAI: a PyTorch library and evaluation platform for end-to-end compression research,” 2020. [Online]. Available: https://github.com/InterDigitalInc/CompressAI/

[3] S. Jiang, T. Ren, C. Fu, S. Li, and H. Yuan, “OMR-Net: a two-stage octave multi-scale residual network for screen content image compression,” IEEE Signal Process. Lett., vol. 31, pp. 1800–1804, 2024.

[4] Z. Cheng, H. Sun, M. Takeuchi, and J. Katto, “Learned image compression with discretized Gaussian mixture likelihoods and attention modules,” in Proc. IEEE/CVF Conf. Comput. Vis. Pattern Recognit., 2020, pp. 7939–7948.

[5] D. Minnen, J. Ballé, and G. D. Toderici, “Joint autoregressive and hierarchical priors for learned image compression,” in Proc. Adv. Neural Inf. Process. Syst., 2018, vol. 31, pp. 10794–10803.

[6] J. Ballé, D. Minnen, S. Singh, S. J. Hwang, and N. Johnston, “Variational image compression with a scale hyperprior,” in Proc. Int. Conf. Learn. Representations, 2018, pp. 1–13.

[7] M. Wang et al., “Transform skip inspired end-to-end compression for screen content image,” in Proc. IEEE Int. Conf. Image Process., 2022, pp. 3848–3852.